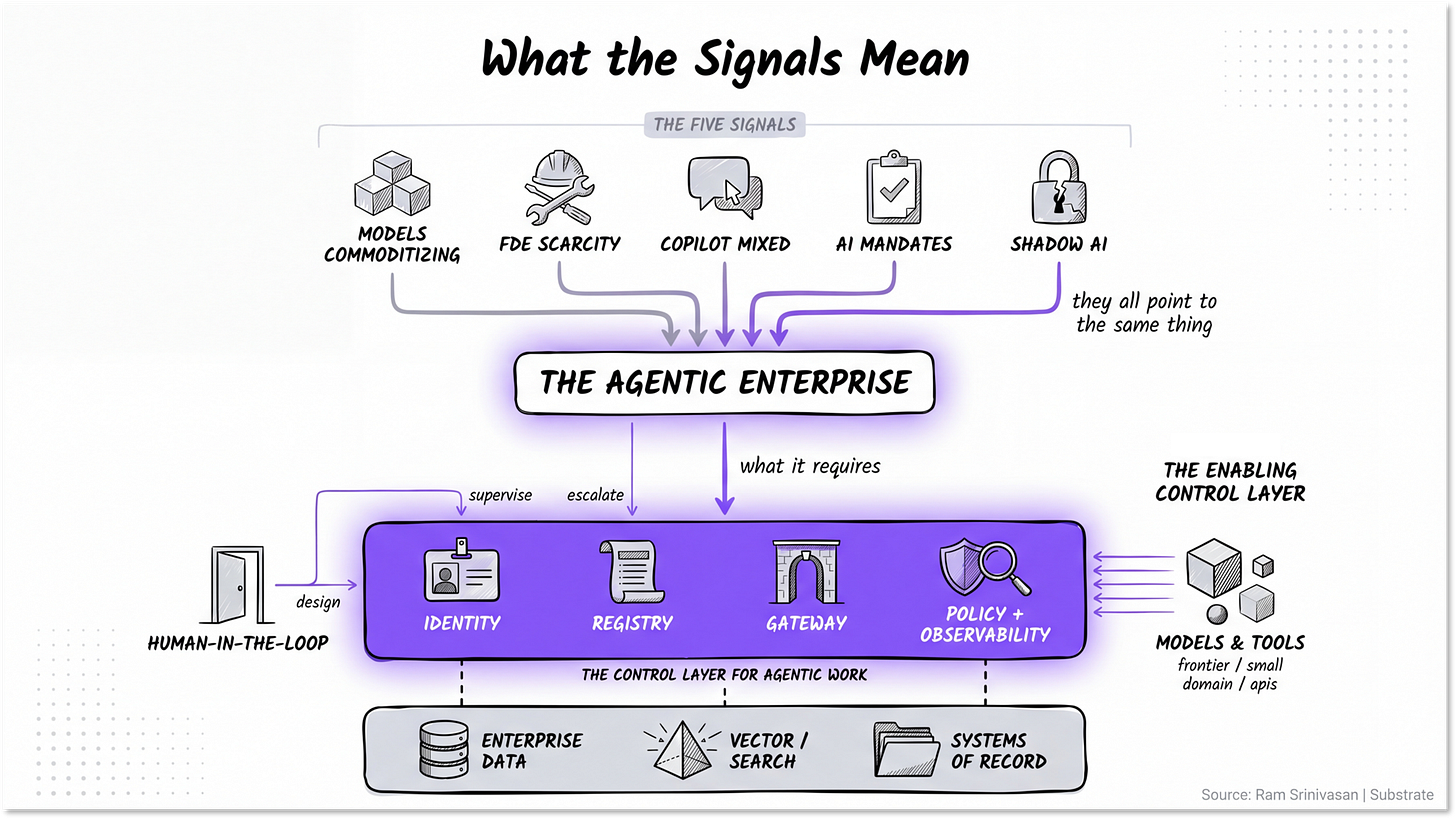

Five signals from the agentic enterprise. And what they mean.

Substrate 01: Intelligence Infrastructure

Over the past month I have had a version of the same conversation with maybe twenty senior leaders. The titles vary. The company sizes vary. The industries vary. The questions do not.

We have Copilot. But my team wants Claude or Cowork. Are they secretly using it?

I need to show results this quarter. Where do I even begin?

Vendors, consultants, and my team all say three different things. Who do I believe?

I am being asked to innovate and cut costs. What do I prioritize?

I don’t want agents without governance. Can I even trust them with my financials?

These look like tactical questions. They are not.

Every one of them is a symptom of the same underlying market shift, and once you can see the shift, the questions stop sounding like five separate problems and start sounding like the same one.

Let’s unpack the signals.

Signal 1: The model layer is commoditizing

Frontier labs are now hosted on every hyperscaler, and the hyperscalers are hosting every frontier model. Anthropic runs on AWS, on Google Cloud, and inside Microsoft Foundry. Claude is available natively inside Gemini Enterprise alongside Gemini and Llama. Oracle hosts everything.

The frontier labs have stopped pretending they will pick one cloud, and the hyperscalers have stopped pretending they will pick one model. That convergence means one thing: the model is no longer the moat. Capability is commoditizing into a substrate, intelligence is becoming infrastructure, and the competition has moved up the stack.

Signal 2: FDEs are the scarcest resource in the market

Every consulting firm, hyperscaler, and frontier lab is racing to hire forward-deployed engineers (FDEs). Accenture, Bain, BCG, Capgemini, Deloitte, and McKinsey are striking FDE arrangements with frontier labs. Anthropic, OpenAI, and Google have all built FDE benches.

This hiring pattern is the market telling you implementation is the bottleneck, not capability. The gap between what an agent can do in a demo and what an agent can do reliably with real data is enormous, and the people who can close it are scarce.

The product form of this shift is the headless agent: agents that run as background workers, without a chat interface, doing work end to end. Think invoice reconciliation, contract review, support triage, sales follow-up, onboarding, variance analysis, and compliance monitoring. That is where production agentic AI actually lives.
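One way to picture a headless agent is as a worker that drains a queue and records an outcome for every item, with no chat window anywhere. The sketch below is a minimal illustration under loud assumptions: the `Invoice` fields, the `reconcile` rule, and the one-cent tolerance are all hypothetical, and the simple comparison stands in for whatever model or tool call a real agent would make.

```python
from dataclasses import dataclass
from queue import Queue


@dataclass
class Invoice:
    invoice_id: str
    billed: float   # amount on the vendor invoice (hypothetical field)
    ordered: float  # amount on the matching purchase order (hypothetical field)


def reconcile(invoice: Invoice, tolerance: float = 0.01) -> str:
    """Stand-in for the agent's reasoning step: match or hand off to a human."""
    if abs(invoice.billed - invoice.ordered) <= tolerance:
        return "matched"
    return "escalate"


def run_worker(jobs: Queue) -> dict[str, str]:
    """Process every queued invoice end to end and record one outcome each."""
    results = {}
    while not jobs.empty():
        invoice = jobs.get()
        results[invoice.invoice_id] = reconcile(invoice)
    return results


jobs = Queue()
jobs.put(Invoice("INV-1", billed=100.00, ordered=100.00))
jobs.put(Invoice("INV-2", billed=250.00, ordered=200.00))

results = run_worker(jobs)
print(results)  # {'INV-1': 'matched', 'INV-2': 'escalate'}
```

The design choice worth noticing is the explicit `escalate` outcome: a background worker with no chat interface still needs a defined path back to a person when it cannot finish the job.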

Signal 3: Copilot is getting mixed reviews, but …

Microsoft Copilot is the AI most knowledge workers actually touch. That makes it the most important enterprise AI deployment in the market and also the easiest one to criticize.

Adoption is uneven. Outcomes are uneven. The reviews are mixed.

But the honest read is more interesting than “Copilot is failing.” Accenture just committed to rolling Copilot out to 740,000+ people. That is not what a loss of confidence looks like. The real issue is structural. Many enterprises bought seats before they redesigned work. They gave employees access to AI and expected the operating model to change on its own. It didn’t.

Some of the disappointment is also a configuration problem. The full Microsoft AI experience depends on license tier, data readiness, workflow integration, security posture, and whether the organization has actually trained people to use the tools inside real work.

The signal is not Microsoft collapse. The signal is that broad deployment is not the same as transformation. Seat activation gets you distribution. It does not get you redesigned workflows, trusted data, scoped permissions, or measurable business outcomes.

Signal 4: Mandates result in tokenmaxxing

Leadership teams that cannot drive AI adoption organically are starting to mandate it. Many are now tracking weekly AI logins for senior promotion decisions. The intent is rational, but the mechanism could be better.

When you mandate usage, gaming the metric becomes the rational response for employees. The market is calling this tokenmaxxing: performative usage that produces the metric without producing the outcome. A senior manager logs in seven times, asks the tool to summarize a lengthy PDF, and the dashboard turns green. Nothing about the actual work has changed. You cannot mandate your way past a tool that does not yet deserve trust. The leading group is investing in the substrate that will earn trust, not in the dashboard that measures its absence.
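The difference between an activity metric and an outcome metric can be made concrete with a small sketch. Everything here is an assumption for illustration: the `WeeklyUsage` fields, the thresholds, and the idea of counting completed tasks are hypothetical, not a description of any real dashboard.

```python
from dataclasses import dataclass


@dataclass
class WeeklyUsage:
    logins: int           # what a mandate dashboard typically counts
    tasks_completed: int  # work items actually finished with AI help (hypothetical)


def activity_status(usage: WeeklyUsage, min_logins: int = 5) -> str:
    """Green when the login count clears the mandated threshold."""
    return "green" if usage.logins >= min_logins else "red"


def outcome_status(usage: WeeklyUsage, min_tasks: int = 3) -> str:
    """Green only when real work items were completed."""
    return "green" if usage.tasks_completed >= min_tasks else "red"


# The senior manager from the example: seven logins, no change to the work.
manager = WeeklyUsage(logins=7, tasks_completed=0)

print(activity_status(manager))  # green
print(outcome_status(manager))   # red
```

Same person, same week, two opposite readings. Which one the organization rewards decides which behavior it gets.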

Signal 5: Shadow AI is a demand signal, not just risk

Employees are using personal ChatGPT, Claude, and Gemini accounts (shadow AI) on company work in numbers most CISOs do not want to admit. The sanctioned tools are not good enough, so workers are routing around them.

Most CISOs read this as pure risk and try to suppress it. The leading group reads it as the most valuable demand signal in the enterprise. Where employees go on their own credit cards tells you exactly which workflows are ready for governed agentic capability and exactly where your sanctioned stack is failing them. The energy is real. It can be channeled into governed infrastructure or driven further underground. The companies that channel it win.

Look back at the questions every leader is asking. The Copilot question is the shadow AI demand signal in disguise. The where-do-I-begin question is the time-versus-substrate mismatch: a quarterly deadline set against infrastructure that takes longer than a quarter to build. The vendor noise question is the model layer commoditizing and every player repositioning. The innovate-versus-cut question is what happens when mandates fill the gap where trust has not yet been earned. The governance question is the problem of a new kind of actor inside your company, one nobody has built an operating model for yet.

So, what does an enterprise need to navigate this?

To operate in a market shaped by these five signals, you need to solve for five workstreams. They are connected, they move together, and most enterprises are still scoping the first one with no view of the last three.

Knowledge architecture. Where the data lives, who can see it, and how agents reach across the systems that have been quietly accumulating for a decade. An agent is only as good as the context it can ground in.

Identity and authorization. What each agent is allowed to do, on whose behalf, with what approval. The harder version is a policy question Legal, Compliance, Security, Risk, and HR have to answer together.

Workflow redesign. Which processes change when multi-step work can be delegated. The first hundred agents automate tasks. The next thousand change how jobs are defined. The ten thousand after that redraw the org chart.

Accountability. Who owns the fleet, the spend, the audit trail, the outcome. The enterprises that get this right will build the agentic equivalent of what Site Reliability Engineering became for cloud infrastructure.

Change management. How employees learn to trust agents without over-trusting them. This is the workstream that gets the smallest budget and turns out to be the binding constraint.
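The identity and authorization workstream is the easiest of the five to make concrete. The sketch below encodes "what each agent is allowed to do, on whose behalf, with what approval" as data plus a default-deny check. It is a minimal illustration, not a reference design: the `Grant` fields, the agent and action names, and the three return values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Grant:
    agent: str            # which agent
    on_behalf_of: str     # which person or team it acts for
    action: str           # what it is allowed to do
    needs_approval: bool  # whether a human must sign off first


def authorize(grants: list, agent: str, on_behalf_of: str, action: str,
              approved_by: Optional[str] = None) -> str:
    """Default deny: anything without an explicit grant is refused."""
    for grant in grants:
        if (grant.agent, grant.on_behalf_of, grant.action) == (agent, on_behalf_of, action):
            if grant.needs_approval and approved_by is None:
                return "pending_approval"
            return "allowed"
    return "denied"


grants = [
    Grant("ap-agent", "finance-ops", "read_invoice", needs_approval=False),
    Grant("ap-agent", "finance-ops", "issue_payment", needs_approval=True),
]

print(authorize(grants, "ap-agent", "finance-ops", "read_invoice"))   # allowed
print(authorize(grants, "ap-agent", "finance-ops", "issue_payment"))  # pending_approval
print(authorize(grants, "ap-agent", "finance-ops", "delete_ledger"))  # denied
```

The code is the easy part. Deciding which rows belong in `grants`, and who gets to add them, is the policy question the functions have to answer together.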

These five workstreams are an HR program, a finance program, a legal program, and an operations program, dressed in technology vocabulary.

That is consistent with IBM’s 2026 CEO study, which found that organizations that redesigned five core business areas (technology, finance, HR, operations, and cross-functional collaboration) are 4X more likely to deliver on their business objectives.

Once you see those workstreams clearly, the platform race also becomes easier to understand. The most important vendors are not only competing on models or assistants. They are competing to become the governed substrate for agentic work.

What the production substrate looks like

This is where the market is moving: from models and copilots to governed agentic infrastructure.

The production question is no longer just “which model is best?” or “which assistant has the best UX?” It is “which platform can run agents against enterprise data, with identity, permissions, memory, tools, audit trails, policy enforcement, and human escalation built in?”

That is the substrate race.

Google’s latest enterprise AI architecture is one of the clearest examples of this direction. Gemini Enterprise is not being positioned as a chatbot wrapper around Gemini. It is being positioned as an operating layer for agentic work: model access, enterprise search, workflow automation, agent creation, governance, and security in one environment.

The important part is not only the model. It is the control plane around the model.

Agent Identity answers: who or what is this agent acting as?

Agent Registry answers: which agents exist, who owns them, what are they allowed to do, and where are they deployed?

Agent Gateway answers: how do agents connect to tools, APIs, and enterprise systems without becoming a security problem?

Model Armor answers: how do you enforce policy, filter unsafe behavior, and reduce exposure to prompt injection and data leakage?
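To make the registry questions concrete, here is a minimal in-memory sketch of what such an inventory has to hold. It is not Google's API or any vendor's product: the `AgentRecord` fields, the `Registry` class, and its method names are hypothetical, chosen only to mirror the questions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    name: str
    owner: str                # accountable person or team
    allowed_tools: frozenset  # tools and systems the agent may call
    environment: str          # where the agent is deployed


class Registry:
    """Hypothetical inventory: which agents exist, who owns them, what they may do."""

    def __init__(self):
        self._agents = {}

    def register(self, record: AgentRecord) -> None:
        # An agent with no owner is exactly the gap a registry exists to close.
        if not record.owner:
            raise ValueError("every agent needs an accountable owner")
        self._agents[record.name] = record

    def can_use(self, agent_name: str, tool: str) -> bool:
        """Unregistered agents and unlisted tools are both refused."""
        record = self._agents.get(agent_name)
        return record is not None and tool in record.allowed_tools

    def deployed_in(self, environment: str) -> list:
        return sorted(r.name for r in self._agents.values()
                      if r.environment == environment)


registry = Registry()
registry.register(AgentRecord("triage-agent", "support-ops",
                              frozenset({"ticketing"}), "production"))

print(registry.can_use("triage-agent", "ticketing"))  # True
print(registry.can_use("triage-agent", "payments"))   # False
print(registry.can_use("unknown-agent", "ticketing")) # False
print(registry.deployed_in("production"))             # ['triage-agent']
```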

This does not mean Google wins. Microsoft has the workflow surface. AWS has the infrastructure surface. Anthropic and OpenAI have model and developer mindshare. Salesforce, ServiceNow, Workday, and others have deep process and system-of-record positions.

Each of these players has real strength. But Google is the first to ship the substrate as an integrated product, not as a portfolio of pieces. That is the move worth watching.

The shape of the market is becoming clear. Every serious enterprise AI platform is being pulled toward the same thing: a governed substrate where agents can access data, take action, and be supervised.

That is a different market from “which chatbot should we buy?”

The test

Sit your enterprise leaders down with the heads of your three largest functions. Walk through one core process in a fully agentic version of the company.

Which agents are involved?

What data do they need?

Who approves their permissions?

Where is the audit trail?

Who owns the IP on agents built by vendors on your systems?

What happens when an agent produces the wrong outcome?

Who owns the cost?

Who has the authority to shut it down?

If the room cannot answer those questions together, your platform is not the limiting factor.

The operating model is.

Every senior leader I have spoken with this month has felt the gap between what their AI investment is producing and what the market keeps telling them is possible. The gap is real. It is not a technology gap. It is the missing operating model the agentic enterprise needs, and building it is your work to do.

Until next time,

Ram